Building /today

tl;dr: get your own /today and experience what this article is about.

It started the way everything dangerous starts. With a small, reasonable request.

I was sitting in my office one morning in March, staring at a calendar that looked like someone had dropped a bag of Skittles on a grid. Six meetings. Three Zoom links I had to hunt down. An inbox full of higher ed news I should have read before the first call but wouldn't because I was already behind. And a vague sense of dread that somewhere in the day's schedule was a conversation I was unprepared for, and I wouldn't know which one until it was happening.

So I opened Claude and typed something like: Can you read my calendar for today and give me a quick briefing?

That was it. That was the whole vision. Fetch the calendar, pull some news headlines, tell me what my day looks like so I can drink my coffee like a human being instead of a hostage negotiator defusing my own schedule. A small automation. The kind of thing you build in an afternoon and forget about by Thursday.

That was five weeks ago. I have not forgotten about it by any Thursday.

What happened instead is that Claude and I built something together that I now can't imagine working without. And the story of how it got there is really about one shift: I stopped treating AI as a tool I instruct and started treating it as a collaborator I think alongside. That distinction will sound soft to you. It isn't.

But I need to back up, because the decision that made everything possible happened before I typed that first prompt. I had to give something up first.

I closed the apps. All of them. The dashboards, the notification panels, the project management tools with their swimlanes and Gantt charts and seventeen different views of the same information. I reduced my entire interaction with the computer down to a terminal window and a blinking cursor. A black screen. A prompt. That's it. Thirty years of building software, and I voluntarily walked back to the command line like some kind of digital ascetic.

Everyone around me thought I'd lost my mind. We've spent decades building castles of UX. Beautiful interfaces. Pixel-perfect buttons. Drag-and-drop workflows. The whole software industry is built on the assumption that humans need to be guided through their interactions with machines, that the experience has to be designed for them, anticipated, pre-built, every possible action laid out like a buffet so you never have to say what you want. Just point and click. We made computers easier to use by making humans less precise about what they needed.

I went the other direction. I stripped it down to the intelligence and my ability to talk to it. That's it. No buttons to limit the request to what some product designer imagined I might want. Just me, describing what I need in plain English to something that can do it. And here's the part I didn't expect: the constraint became the gift. When your only interface is language, you have to think about what you want before you open your mouth. When was the last time a dropdown menu made you do that?

This is the thing nobody in tech wants to say out loud: the interface is the bottleneck. Every modal, every carefully designed user flow, is a cage. It shows you what someone else decided you should be able to do. A terminal with an AI on the other end shows you what you can think to ask for. The ceiling moved. The limit isn't the technology anymore. It's your willingness to be specific.

So when I sat down that morning and typed my little calendar request, I wasn't using an app. I was having a conversation. And that changes everything about what happens next.

The first version did exactly what I asked. It pulled my Google Calendar, listed the meetings, noted who was in them, and surfaced the Zoom links so I didn't have to click through four different invites. It worked. It was useful the way a drawer organizer is useful. It saved me four minutes. I was underwhelmed in the exact way you should be underwhelmed by version one of anything.

But here's what happens when your interface is a conversation instead of a product. The conversation doesn't end when the output appears. There's no "done" state. No screen to close. You look at it. You think. And then you say the thing that no button ever gave you a way to say: What if it also...

What if it also pulled the news? Not all the news. My news. Higher education, workforce policy, credential reform, the specific policy world I operate in every day. What if it checked a handful of sources I actually trust and surfaced what's relevant to the things I'm working on right now? Not a feed. Not an aggregator. A filter with judgment.

So we added that. I gave Claude a list of sources: Inside Higher Ed, The Chronicle, Higher Ed Dive, and a few Substacks I read when I have time, which is never, except for Beth Rudden's and Julia Freeland Fisher's. Claude fetched them, read them, and told me which ones mattered for my world. Which stories connected to my meetings, my products, my week. Not what was trending. What was relevant.

And then something happened that I want to be precise about, because this is the moment the project changed. Claude surfaced a story about transfer credit loss: 43% of community college credits are lost when students transfer. But instead of listing it as a headline, it tied the story to my 1:30 meeting. It told me the story was relevant to KreditFlow, one of the products we're building, and that the Future Force call that afternoon was the right place to bring it up. It didn't just fetch the information. It understood the context of my day and told me where the information belonged in it.

I hadn't asked for that. I want to be clear about this. I did not sit down and write a requirements document that said "cross-reference news stories with calendar events and map them to product strategy." Nobody designed that feature. It emerged because I'd been having an ongoing conversation with Claude about what I was building and why. Claude had the context. When I gave it the information, it did what any good colleague would do. It connected the dots.

That's when I stopped thinking of this as a script and started thinking of it as a briefing.

A script runs. A briefing thinks. I've been in rooms my whole career where the person who won wasn't the smartest or the most senior. They were the most prepared. They walked in knowing the one thing everyone else hadn't read yet. They had the number, the name, the context that made everyone else look up from their laptops. The briefing started giving me that. Every morning.

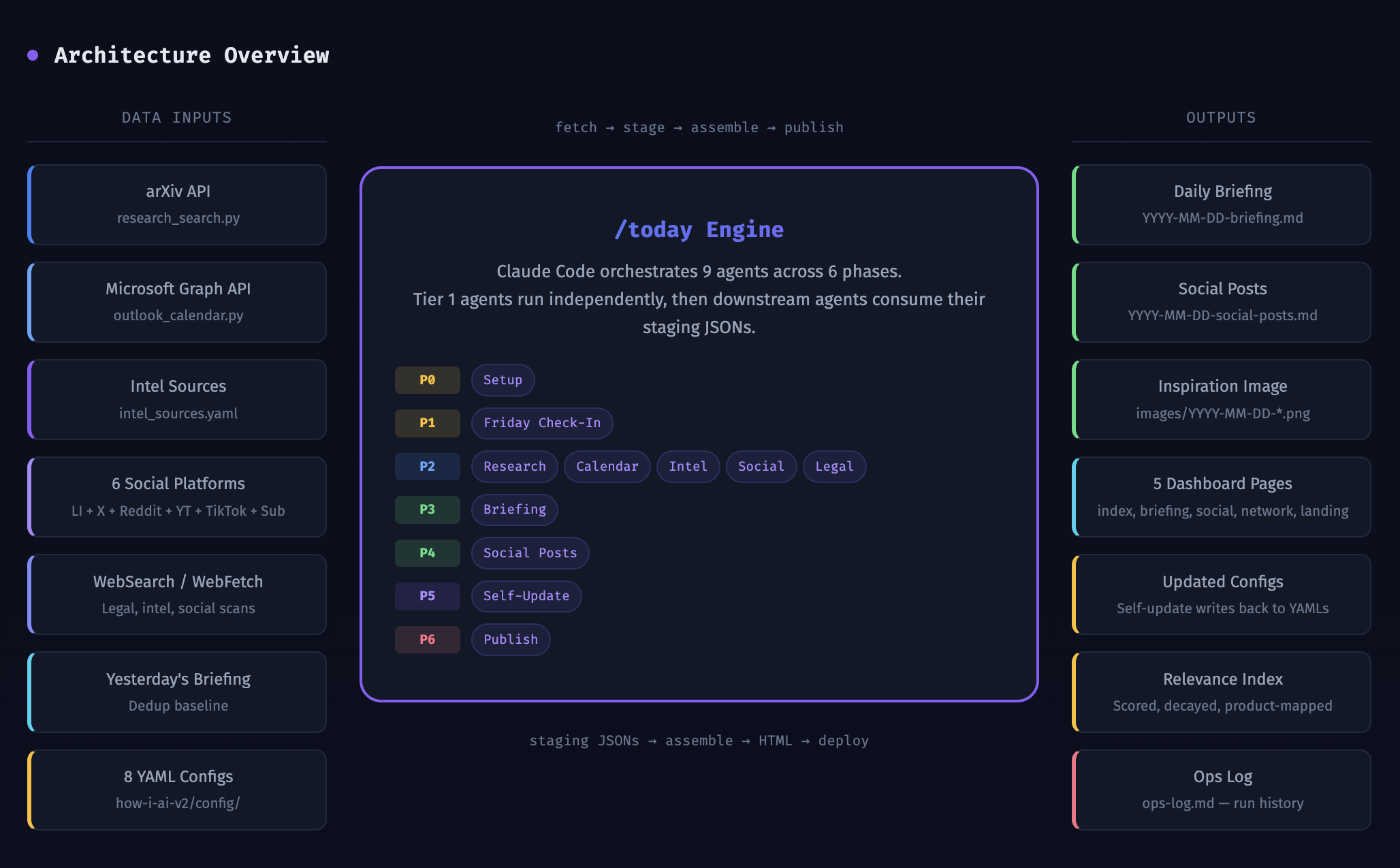

We kept going. Of course we kept going. Each morning I'd look at the output and see the next question. What if it tracked which sources were actually producing useful intel and which ones were noise? We built a scoring system. Every source gets a relevance score with a seven-day half-life, so its weight halves every week unless new hits replenish it. If a source keeps surfacing stories that connect to my work, its score climbs. If it's producing junk, the score decays. After four consecutive misses, the system auto-pauses the source and goes looking for a replacement. I didn't design that system in advance. I watched the briefing produce noise from one source three days in a row, and I said to Claude: this one's wasting our time, and it'll happen again. Can we make the system learn that?
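For readers who want the mechanics, here is a minimal Python sketch of a half-life score with an auto-pause rule. It is not the /today code. It assumes a "miss" means one briefing run in which the source contributed nothing relevant, and the names `SourceScore`, `record`, and `decay_to` are hypothetical. Only the seven-day half-life and the four-miss threshold come from the system described above.

```python
from dataclasses import dataclass

HALF_LIFE_DAYS = 7.0        # seven-day half-life, per the article
MAX_CONSECUTIVE_MISSES = 4  # auto-pause threshold, per the article


@dataclass
class SourceScore:
    """Hypothetical per-source scoring state (names are illustrative)."""
    name: str
    score: float = 0.0
    last_update_day: float = 0.0
    consecutive_misses: int = 0
    paused: bool = False

    def decay_to(self, day: float) -> None:
        """Exponentially decay the score so it halves every HALF_LIFE_DAYS."""
        elapsed = day - self.last_update_day
        self.score *= 0.5 ** (elapsed / HALF_LIFE_DAYS)
        self.last_update_day = day

    def record(self, day: float, relevant: bool, weight: float = 1.0) -> None:
        """Decay to `day`, then credit a relevant hit or count a miss."""
        if self.paused:
            return  # once paused, a source is no longer scored
        self.decay_to(day)
        if relevant:
            self.score += weight
            self.consecutive_misses = 0
        else:
            self.consecutive_misses += 1
            if self.consecutive_misses >= MAX_CONSECUTIVE_MISSES:
                self.paused = True  # the caller would now look for a replacement


src = SourceScore("Example Feed")
src.record(day=0, relevant=True)
src.decay_to(day=7)
print(round(src.score, 3))  # 0.5
for day in range(8, 12):
    src.record(day=day, relevant=False)
print(src.paused)  # True
```

Decaying lazily, at the moment a source is next scored, avoids a nightly batch job: the time elapsed since the last update is all the formula needs.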

We could. We did.

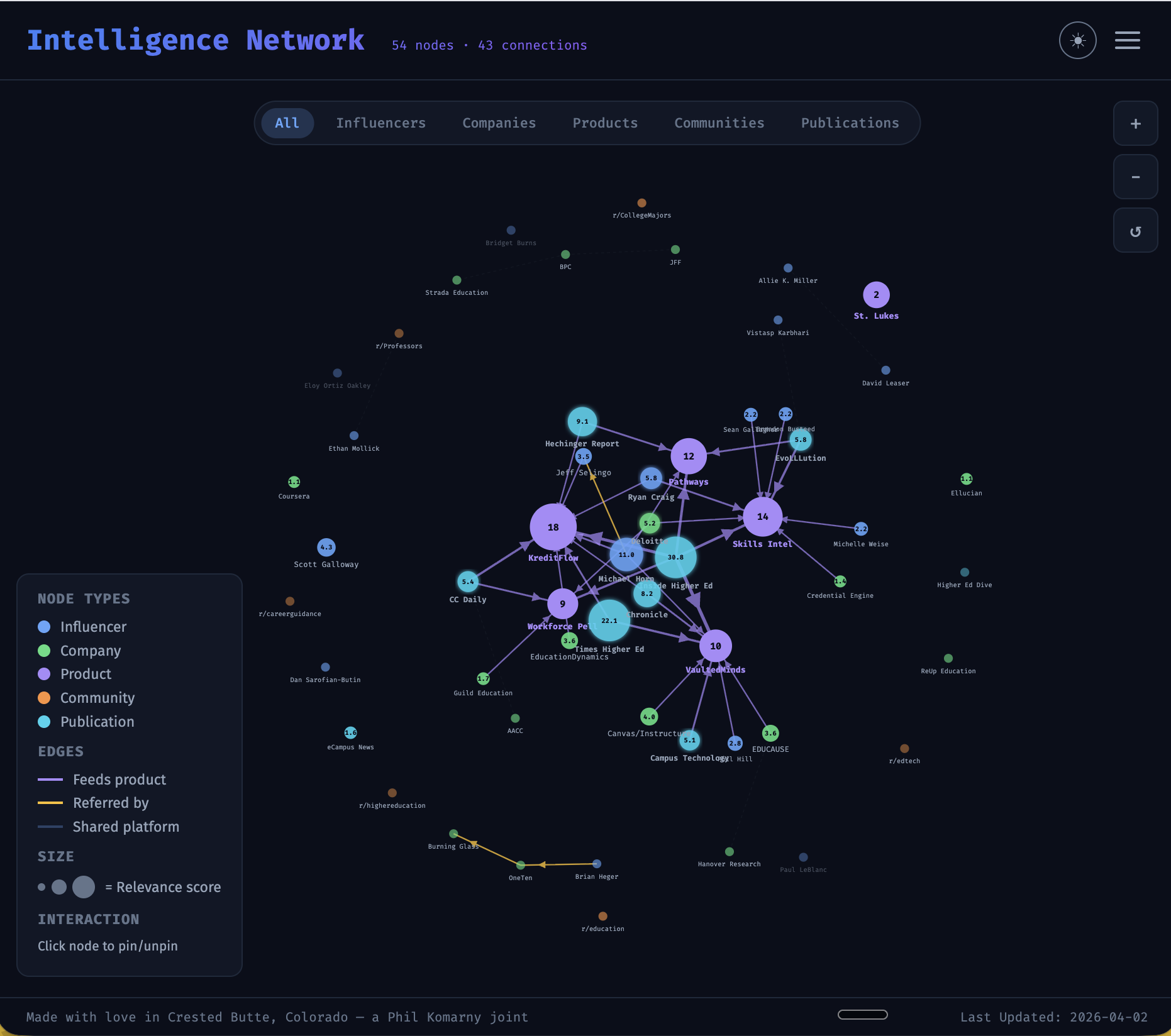

Then we added social monitoring. Not the dystopian corporate listening post version. I mean tracking the handful of people in higher ed whose thinking I care about. Michael Horn, Ryan Craig, Phil Hill. What are they talking about this week? Does it intersect with anything in my briefing? We built convergence detection. When a news story and a social post and a research paper all point at the same thing, the system flags it. Three independent signals pointing the same direction isn't a coincidence. That's a wave forming. See it on Tuesday, you can ride it. See it on Friday, you're reacting.
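Convergence detection can be sketched the same way. This assumes each signal has already been tagged with a topic upstream (in /today that judgment is Claude's; here it is just a string). The `Signal` type, the `find_convergences` function, and the sample signals are illustrative, not real data.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Signal:
    kind: str    # e.g. "news", "social", "research" (illustrative labels)
    topic: str   # assumed to be tagged upstream
    source: str


def find_convergences(signals: Iterable[Signal], min_kinds: int = 3) -> List[str]:
    """Return topics backed by at least `min_kinds` distinct kinds of signal."""
    kinds_by_topic = defaultdict(set)
    for s in signals:
        kinds_by_topic[s.topic].add(s.kind)
    return sorted(t for t, kinds in kinds_by_topic.items() if len(kinds) >= min_kinds)


signals = [
    Signal("news", "transfer credit", "Inside Higher Ed"),
    Signal("social", "transfer credit", "a tracked influencer"),
    Signal("research", "transfer credit", "a policy report"),
    Signal("news", "program cuts", "Higher Ed Dive"),
    Signal("news", "program cuts", "The Chronicle"),
]
print(find_convergences(signals))  # ['transfer credit']
```

Counting distinct kinds of signal, rather than raw mentions, is what keeps the signals independent: two news outlets covering the same story still count as one kind.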

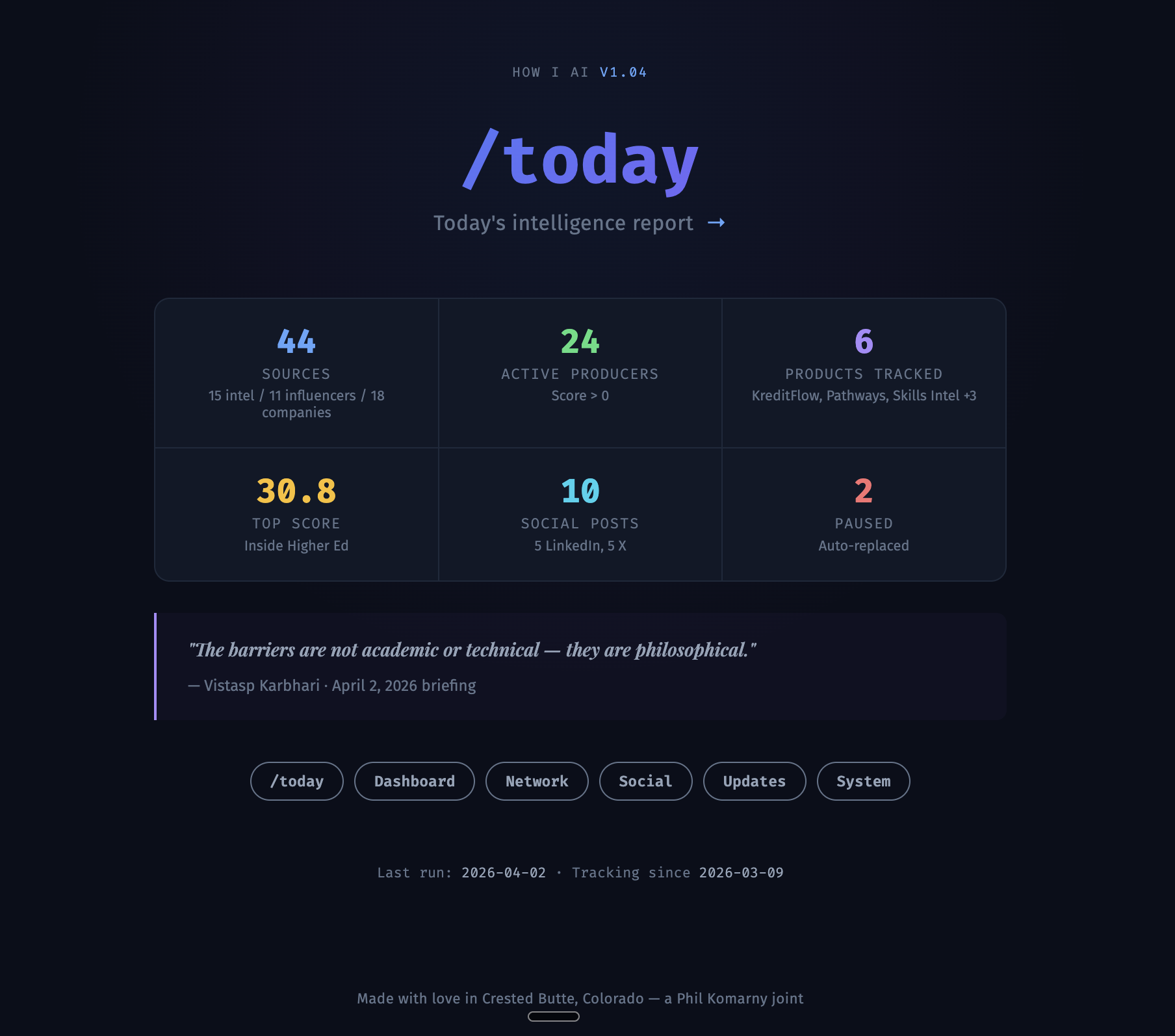

The system now monitors fifteen intel sources across six platforms. Eleven influencers. Seventeen companies. Reddit communities, YouTube channels, and, God help me, TikTok, because that's where the student voice lives and ignoring it is a choice with consequences. It scores everything. It heals itself. When a source throws a 403 error, it finds a replacement and swaps it in. I woke up one morning and the briefing had replaced University Business with eCampus News overnight because UB had changed their site structure. The system noticed before I did.
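The self-healing swap might look something like this. It is a hypothetical sketch: the URLs are placeholders, and `check_status` is injected so the logic runs without a network. A real version might wrap `urllib.request.urlopen` and read the `code` attribute of the `urllib.error.HTTPError` it raises on a 403.

```python
from typing import Callable, Dict, List, Tuple


def heal_sources(
    active: Dict[str, str],
    candidates: List[Tuple[str, str]],
    check_status: Callable[[str], int],
) -> Dict[str, str]:
    """Swap out any active source whose URL returns HTTP 403.

    `active` maps source name -> URL; `candidates` is an ordered list of
    (name, URL) replacements. Each blocked source is replaced by the first
    candidate that returns HTTP 200; if none does, it is simply dropped.
    """
    healed = dict(active)
    pool = [c for c in candidates if c[0] not in active]
    for name, url in active.items():
        if check_status(url) != 403:
            continue  # not blocked; leave it alone
        del healed[name]
        while pool:
            cand_name, cand_url = pool.pop(0)
            if check_status(cand_url) == 200:
                healed[cand_name] = cand_url
                break
    return healed


# Placeholder URLs standing in for real feeds.
statuses = {
    "https://example.org/university-business": 403,
    "https://example.org/ecampus-news": 200,
}
sources = {"University Business": "https://example.org/university-business"}
replacements = [("eCampus News", "https://example.org/ecampus-news")]
print(heal_sources(sources, replacements, lambda url: statuses.get(url, 404)))
# {'eCampus News': 'https://example.org/ecampus-news'}
```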

But the part that still gets me is the SITREP.

Every briefing opens with a situational report. Three to four sentences. A synthesis of what's happening in my world today, what's converging, where the pressure is building, and the one thing I need to walk into my first meeting already knowing. This morning's SITREP told me the transfer credit crisis, program cuts accelerating across three states, and the Workforce Pell comment deadline in six days were all running through the same product. It told me which of my six meetings were the right venues to act on that convergence. Before I'd finished my coffee.

I didn't write the SITREP logic. I shaped it. I told Claude when it was too generic, too cautious, when it was burying the lead. But the format, the way it connects the big picture to the personal to the tactical? That came from the back and forth. Weeks of Claude watching me react to its output and adjusting. Me saying no, don't tell me what happened, tell me what it means for today. Claude hearing the difference.

The whole thing renders as a dashboard now. Dark mode, naturally, because I'm not an animal. There's a daily quote that Claude picks based on the day's themes, not from some inspirational poster database but from something it read that morning that connects to the substance of the briefing. Schedule with Zoom links. Focus recommendations ranked by urgency. Intel cards grouped by domain. Product tags on every story so I can see at a glance which parts of the business each piece of intelligence touches.

It generates its own improvement suggestions. Weekly. A markdown file that tells me what's working, what's broken, where the blind spots are. Last week it told me that St. Luke's Academy, one of our six named partnerships, had zero source coverage. No intel source was tagged to it. No stories were surfacing. A blank spot in the map that I hadn't noticed. The system noticed for me.
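The coverage check behind that catch can be as simple as a set difference between the named partnerships and the tags on active sources. Another hypothetical sketch: apart from St. Luke's Academy, the partner and source names are placeholders, and the markdown bullet format is an assumption about how a gap might be written into the weekly file.

```python
from typing import Dict, Iterable, List, Set


def coverage_gaps(partners: Iterable[str], source_tags: Dict[str, Set[str]]) -> List[str]:
    """Return partners that no active source is tagged to.

    `source_tags` maps source name -> set of partner tags on that source.
    """
    covered: Set[str] = set()
    for tags in source_tags.values():
        covered |= tags
    return [p for p in partners if p not in covered]


partners = ["Partner A", "Partner B", "St. Luke's Academy"]
source_tags = {
    "Source 1": {"Partner A"},
    "Source 2": {"Partner A", "Partner B"},
}
for gap in coverage_gaps(partners, source_tags):
    print(f"- {gap}: no intel source tagged")  # one markdown bullet per gap
```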

Then I shared it.

I zipped a folder, dropped it in Slack, and told a colleague to open Claude in the Obsidian sidebar. That's the whole installation. A zip file and a markdown file. The markdown is the brain. It holds the instructions, the logic, the personality of the whole system. Copy it into your vault and /today is yours.

But here's what makes this different from every piece of software I've ever handed someone: it doesn't start by running. It starts by asking. The first thing Claude does is interview you. Who are you? What do you do? What are you working on? What sources matter in your world? What does a good day look like for you? Because /today built for me would be useless for my colleague. He doesn't care about transfer credit policy. He's got his own world, his own problems. Claude needs to know him, not me. So it asks. It listens. It builds his version from scratch, shaped by his answers.

I didn't train him on it. Didn't write documentation. Didn't do the thirty-minute screen share where he pretends to follow along. He got stuck twice. Both times he asked Claude. First time was calendar sync. He uses Outlook, not Google. I'd built the whole thing around Google Calendar and never gave Outlook a second thought. Didn't matter. He told Claude he was on Outlook, and Claude walked him through the connection until his calendar was flowing in. I wasn't on the call. I wasn't in the chat. Claude handled it because Claude understood what /today needed and could figure out how to get there from where this guy was starting.

A zip file, a Slack message, and a ten-minute conversation with an AI. That's all it took. Someone else had a personalized intelligence briefing running from their own vault, tuned to their own work, pulling their own calendar. No developer. No IT ticket. No me.

Look. I know what this sounds like from the outside. Guy builds elaborate AI morning ritual, shares it via zip file, news at eleven. But here's what I've been trying to figure out how to say since the first week this thing started working: this is what building with AI feels like when you do it right. Not a prompt. Not a one-shot. A sustained collaboration where the system gets smarter because you get more honest with it about what you need.

Every morning I open it and something is different. Not because the code changed overnight, but because the world changed and the system noticed. New sources discovered. Old sources dropped. Connections I wouldn't have made myself because I don't have the bandwidth to read fifteen publications before 9 AM, but Claude does. I bring the judgment. It brings the reach. Neither of us could produce this alone.

Five weeks ago I asked an AI to read my calendar. This morning it told me three things were converging in my industry, which of my meetings was the right venue for each one, which product was best positioned to respond, and that a federal comment deadline in six days was a regulatory moment I shouldn't miss. Then it showed me a quote from a university president I'd never heard of that framed the whole day. Then it told me its own source monitoring had a blind spot and suggested how to fix it.

I don't know what to call that. It's not a script. It's not an assistant, exactly. It looks like a dashboard but that's not right either. The closest I can get: it's a collaborator that remembers everything I've told it and shows up every morning having already done the reading.

And it all runs from that black screen. That blinking cursor. That stripped-down terminal I walked back to when everyone thought I was regressing. No app. No interface designed by someone guessing at my needs. Just me, describing my day in plain English to something capable enough to build the experience I described. The dashboard? Beautiful. Dark mode, responsive, product-tagged, source-scored. I didn't drag and drop it into existence. I didn't configure it in a settings panel. I asked for it. In words. Then I asked for it to be better. Then better again. The AI became a companion, a terrifyingly capable one, that can create any experience you can articulate. The only limit is how clearly you can say what you need.

That should scare the software industry and thrill everyone else. We don't need better interfaces. We need better questions. The person who can describe what they need to an intelligence that can build it just skipped the entire product roadmap. They don't need your app. They don't need your twelve-screen onboarding flow. They need a terminal and the willingness to be honest about what their day requires.

That's what we're building. Not for me. For everyone. The vault holds your knowledge. /today reads it and meets you where you are. Every day it gets a little better because every day you tell it a little more about what matters. Not by clicking. By talking. By being specific. By giving it the one thing no interface ever asked you for: the truth about what you need.

It started with a calendar fetch and a black screen. It became the most useful thing I've built in thirty years. And I built it by opening my mouth and saying what I meant.

It's all in how you ask.

Want to build your own /today? Let's talk, or find us on Discord.